Thamil computing has made a lot of progress in the past 10-20 years. Much of the work that has reached the public has been in the areas of fonts/rendering and input methods. Thanks to the continuing efforts in these areas, most of those issues have been solved, Thamil text has standardized on a single character set (Unicode), and we have nice fonts and input methods for major operating systems and mobile devices. The new environment has enabled the widespread creation and consumption of digital content in Thamil.

Now, the next set of problems to solve are handling Thamil text that is written using the Unicode character set. Unicode is designed for all languages’ fonts to standardize, but the slight cost to Thamil language processing has been its complexity. But the challenges can be handled easily by representing the data in a suitable data structure, which in this case is a prefix tree (or “trie”).

Before using Unicode, the issues related to fonts for Thamil computing were many. First of all, fonts needed to be created. Many of the original fonts also used their own character sets (mappings of codepoint to letter shapes), meaning that the fonts were often not interchangeable. Even ensuring that text in a particular font could render with that font was not easy, and it is a task that continues to be needed to be done for each new computing platform.

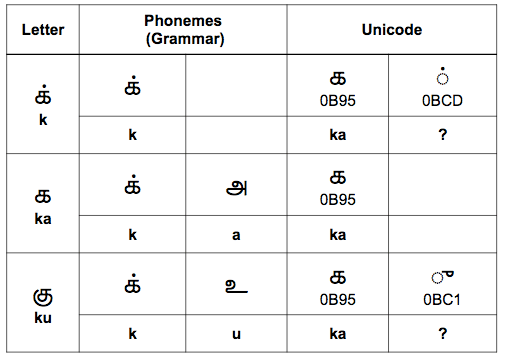

The main issue of the Unicode Thamil language specification is that the codepoints are based on how you write, not on the phonemes (sounds) of the language on which the grammar is also based. Also, many letters of the language must be constructed by the combination of more than one codepoint, and this results in the codepoint sequence of some letters being a prefix of other letters’ character sequences. The problems of the design of the specification may be due to the fact that it wasn’t decided by Thamil people. Since the designs for the Unicode specifications for many other languages in South Asia / India were done similarly by the same Indian government body, this same problem likely exists in those languages, too. See my Clojure/West 2015 talk (slides here) for a brief explanation of the problem. The following image is based on a presentation slide:

A prefix tree (trie) is used to represent several strings in an efficient way when the strings may share common prefixes with each other. In the trie, a string (a sequence of characters/codepoints) is represented in the tree as a path from the root to a leaf node, with each character represented as a node in the tree. If you need to store all of the words from a language dictionary, encyclopedia, or corpus of documents to be searched, the trie can be applied and reduce overall storage requirements.

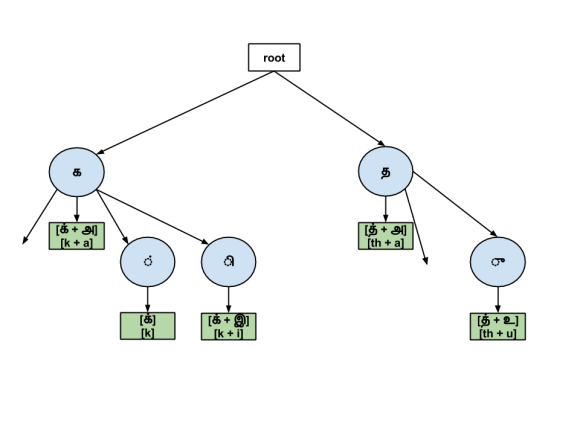

Although the scale of storage is much different, the prefix property is true for the sequences representing each Thamil letter in the Unicode encoding. The trie gives us the ability to look up the existence of a string, like a set. We can use this for splitting up a string of text into strings representing each letter. Such a tree might look like this:

The input to building the tree is a collection of strings. For every string in the collection, we traverse the characters in the string in order to find the largest prefix that we can build that is shared with some other string in the trie. That largest prefix determines where we insert the string into the trie.

We perform a similar operation when we split a string of Thamil text into constituent letter strings. We add characters from the input string to build up the largest string that we can that exists in the trie. We then remove those characters from consideration from the input and repeat the process. If we start with the input string “பட்டை”, the Unicode characters contained in the string, in order, are ‘ப’, ‘ட’ ‘்’ ‘ட’ ‘ை’.

| active characters | new char | largest string found? | new active chars |

|---|---|---|---|

| ப | |||

| ப | ட | ப | ட |

| ட | ் | ட் | |

| ட் | ட | ட் | ட |

| ட | ை | டை | |

| டை | டை |

Through this process, we can unambiguously parse our input string into individual strings that correspond to the alphabet that we store in the trie. For any element in our alphabet, it does not matter how many characters long its string representation is. Because we have chosen an appropriate data structure for our problem domain, our choice of how to implement the string parser is independent of which values are stored in the data structure. As a result, it seems very likely we can just as easily use the same trie code that handles Thamil text in order to deal with the text in other South Asian or South East Asian languages, given that Singalese and South East Asian writing scripts derive from the Thamil Pallava kingdom’s script, and there is a similarity in the scripts of South and North India.

At this point, it is worth addressing the point that, at least for Thamil, there are possibly easier ways to parse input text into individual Thamil letters. For example, all vowels only require one character, and all other letters are either consonants or consonant+vowel combinations. For a given consonant, all of the strings for that consonant and consonant+vowel combinations start with the same base character, and there is optionally 1 single character that might follow. Assuming that you are not the end of your input, you can look ahead to the next character or else maintain state of the current character as you move forward.

However, it is important to remember that easy and simple are two different things, and even this example shows how those concepts can differ. When an approach that is each to understand and/or implement, we use a relative measure to make that assessment. It is important to strive for code and solutions that are simple, that is to say code whose functions and functional units are as “standalone” (stateless and void of intertwined dependencies) as possible. Simpler code leads to more maintainable and extensible code over the long-term. Specific to the topic of South/SE Asian alphabets (abugidas), we can arrive at simpler code by correctly identifying and using prefix trees.

After implementing a trie, I modified the prefix tree that stores the strings of the elements of the alphabet so that each string can be associated to a value. Having a value associated to each string allows me to implement operations like conversion. One type of conversion is to convert each letter into its constituent phonemes. For Thamil, each letter of the alphabet corresponds to either 1 or 2 phonemes. (Confusingly, keep in mind that this point is completely independent of the fact that a Thamil letter is represented in Unicode by either 1 or 2 code points.) Once you can convert a string of Thamil text into a sequence of its phonemes, you can use the inverse the association (which is an association of a sequence of 1 or 2 phonemes to a letter) to convert text into phonemes, apply transformations at the phoneme level, and convert the resulting phonemes back into proper text again. Tries can also be used to convert various pre-Unicode character encodings to Unicode and vice versa. The following image shows a trie that can be used to split Thamil text into its constituent phonemes:

As I mentioned in my presentation, we all (myself included) should investigate how SE Asian languages (Thai is a good example) handle script handling. That knowledge will help in processing Thamil text (and also that of other South Asian languages), not least because SE Asian language scripts derive from a Thamil one that the Pallavas made based on the Thamil script of the time (1st millennium CE). What makes Thai an interesting language to investigate is that text does not include any spaces between words. (This is how ancient Tamil inscriptions were carved and literature transcribed on palm leaves. This is also how Latin was transcribed as recently as the illuminated manuscripts of the Western Medieval Period.) The level of complexity in parsing individual words from a totally conjoined text would likely require clever, useful solutions.

Similarly, I think that Thamil computing enthusiasts could benefit by spending some time learning at least the basics of Korean and Japanese phonetics. The connection between ancient Japanese and ancient Tamil is comprehensive and is in multiple ways undeniably not just a coincidence. Korean and Japanese are already understood to be related, so transitively, Korean should have an affinity to Thamil. Indeed, the Korean alphabet was created to cleanly represent its simple set of phonemes, and each Korean letter represents either 1, 2 or 3 phonemes. Doing natural language processing of Korean text (ex: processing a Korean internet search query) begins by first by decomposing the query text and target/corpus text into phonemes. And doing Thamil NLP should be no different, really. The suffixes through which Thamil attaches additional grammatical meaning (விகுதி) are added to words using an arithmetic that is based on phonemes. For example, I wrote code to handle basic rules for suffix addition that is essentially the computer code representation of general rules for adding suffixes (சந்தி). Whereas string search algorithms may be presented in English, where there is a 1-to-1 correspondence of alphabetic character and character set codepoint, perhaps the bare minimum work required to adapt such algorithms to a language like Thamil requires decomposing text into the language’s phonemes. For simple algorithms — for lack of a better example, take as an example global and local string/sequence alignment and apply it for natural-language searching and typo correction — I can imagine that using this algorithm with Thamil phonemes would work okay. For more complex algorithms, modification of the default (English-language) algorithm might require extra modification of the data structures involved to handle the fact that Thamil letters contain more than one phoneme. Determining what works and how will require investigation. I hope that all of the above ideas give those who are dedicated to improving Thamil computing ideas for focused, practical research that will help improve their efforts, and by extension, all of our efforts. I am excited to see all of the advancements that we all will achieve in the future together.

2 replies on “Using Prefix Trees for Thamil Language Processing”

[…] href=”http://www.elangocheran.com/blog/2015/11/using-prefix-trees-for-thamil-language-processing/thamil-un…; rel=”attachment wp-att-478″><img […]

[…] for 2017 in Toronto, Ontario, Canada just concluded. I presented a more in-depth explanation of my previous post on prefix trees along with specific examples of how I have used […]